Stop the Steal: How AI Detects Fraud Before You Even See a Charge

Written May 2026

Sandra noticed the charge on a Wednesday afternoon.

$847.50. A sporting goods store in Phoenix. The problem: Sandra has never left Ohio. Sporting equipment isn’t something she buys. The store name meant nothing to her.

The fraud had happened two days earlier. By the time she saw it on her statement, the charge had already moved through a payment processor and cleared, and the money had landed in someone else’s account.

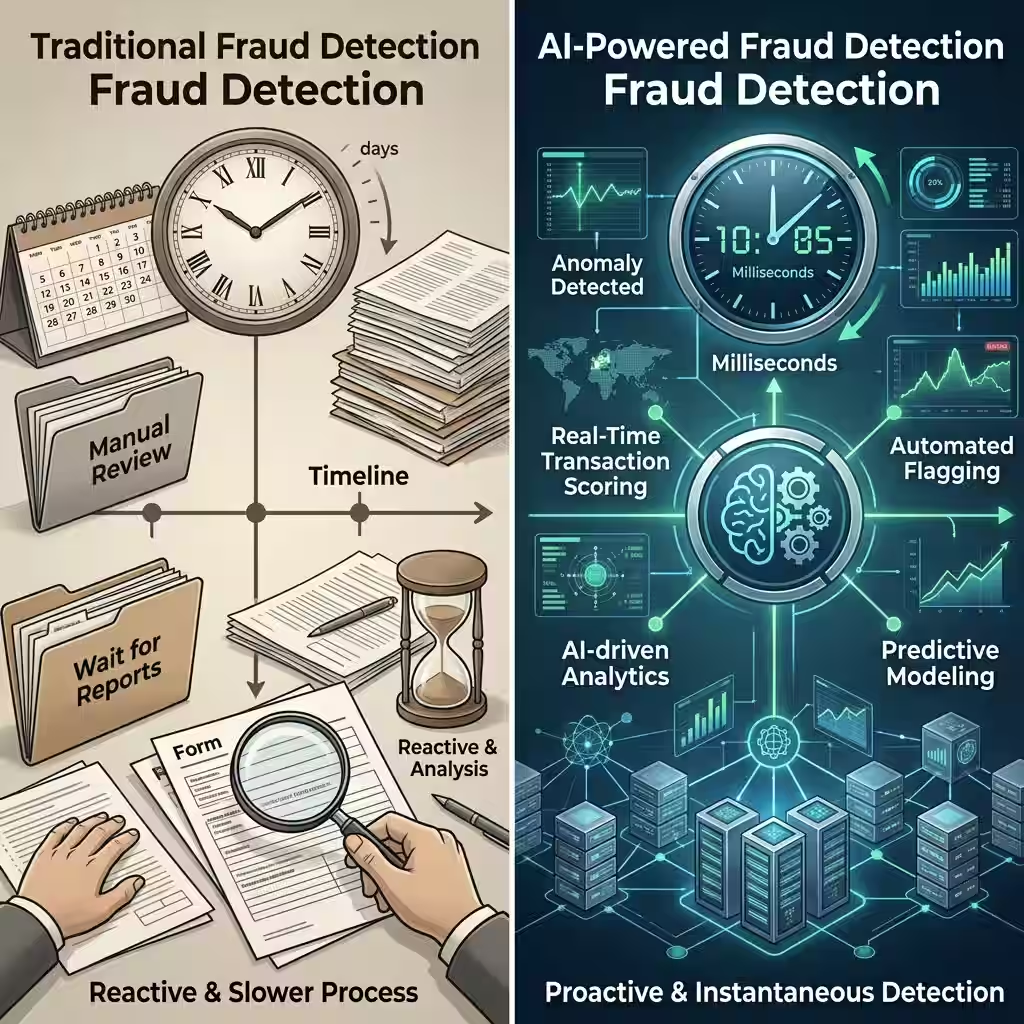

That’s how fraud worked five years ago. By the time you saw it, it was done.

In 2026, that timeline has changed completely. The charge Sandra saw on her statement would now get flagged — in most cases stopped entirely — before it ever cleared. Not because a human analyst caught it. Because an AI fraud detection model identified the transaction as anomalous in milliseconds and blocked it before the merchant even finished processing.

This article explains exactly how that works, what the systems are actually doing, and why it matters more right now than it ever has before.

Nothing here is financial or legal advice. These are facts and observations. Talk to a licensed professional for advice specific to your situation.

Why Fraud Got Worse Before AI Got Better

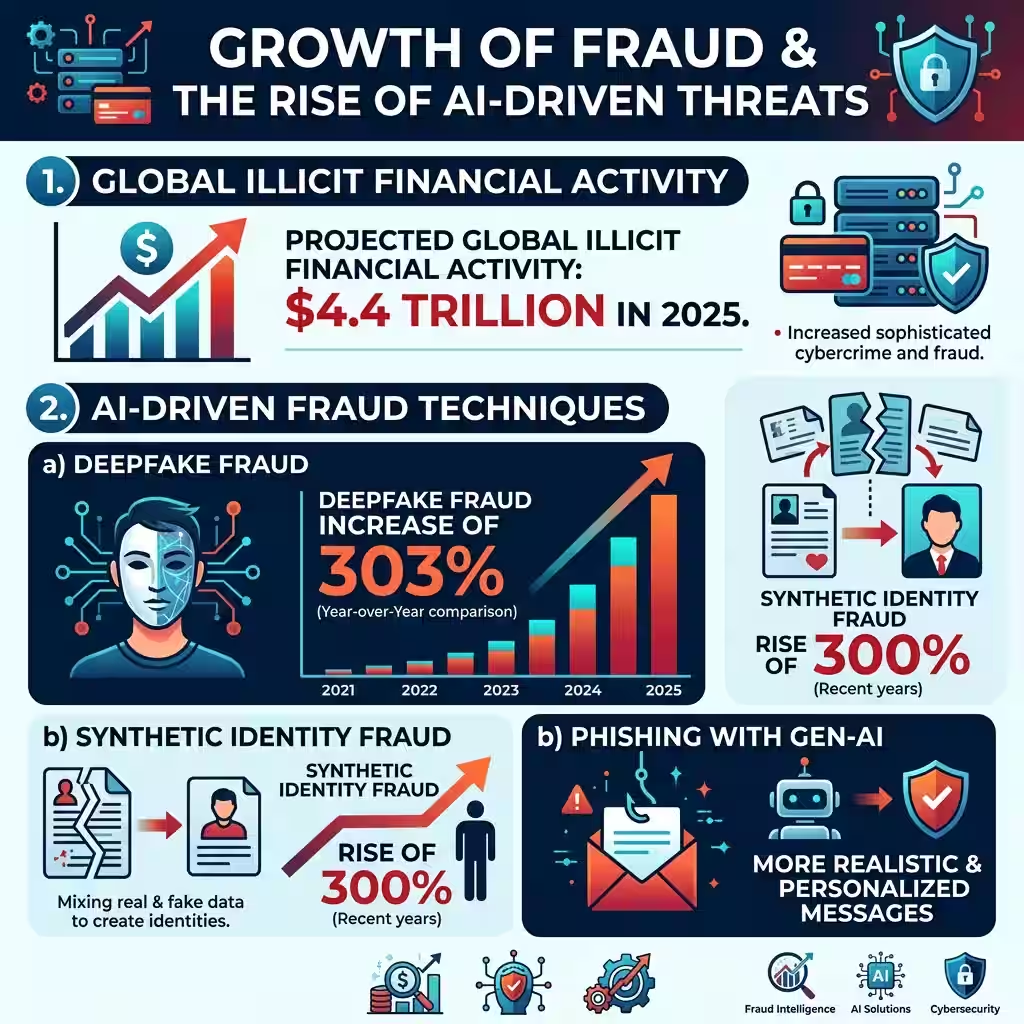

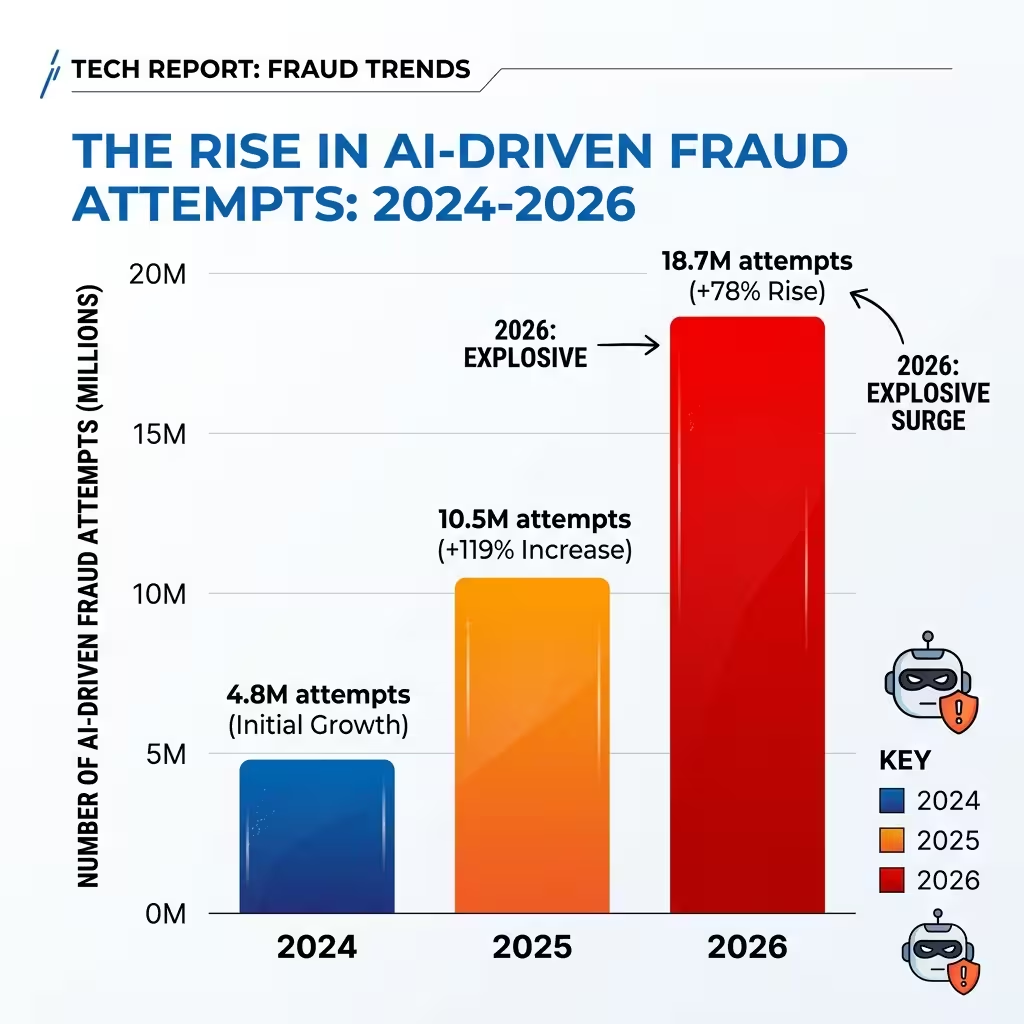

Global illicit financial activity hit $4.4 trillion in 2025. That number grew at 19.2% annually — almost six times faster than the global economy expanded. The people committing fraud aren’t individual hackers in basements. They’re organized, scaled, and using the same AI tools that financial institutions are.

Deepfake fraud attempts increased 303% in the United States in the past two years. Synthetic identity fraud, in which criminals use AI to build identities complete with realistic documents, photos, and digital histories, has become a primary attack vector at financial onboarding. The share of digitally presented media that is entirely AI-generated jumped 300% from previous years.

This is what fraud professionals are actually facing right now: 74% of them report a general increase in online fraud, and 75% report a direct rise in AI-driven attacks specifically. The fraud business has industrialized. What used to require significant technical skill is now available as “scam-as-a-service”: packaged scripts, fake infrastructure, and deepfake tools sold to anyone willing to pay.

For banks and card networks, the arms race is genuine. The only way to fight AI-driven fraud at scale is with AI-driven detection at scale.

How Real-Time Transaction Monitoring Actually Works

The first thing to understand is the speed.

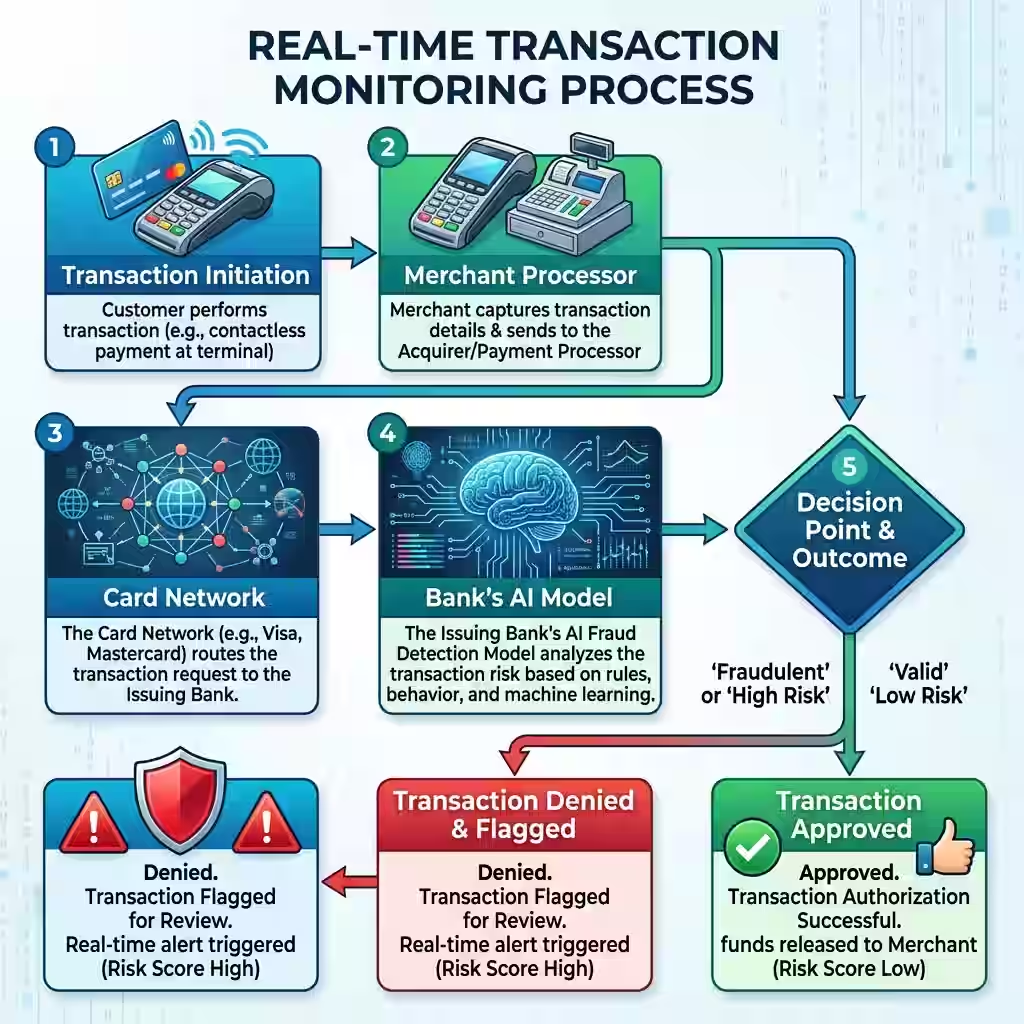

When you tap your card, a transaction request goes to the merchant’s processor, then to the card network, then to your bank’s authorization system. That entire chain — from tap to approval — typically takes between 1 and 3 seconds. The AI fraud model has to run inside that window. Usually in the first few hundred milliseconds.
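Because the score has to land inside that window, a scoring service needs a plan for the case where the model is slow. The sketch below assumes a 300 ms scoring budget and a simple rule-based fallback; the budget, `rules_fallback`, and the stand-in models are all hypothetical, and real issuers and networks set their own timeout and stand-in policies.

```python
import concurrent.futures as cf
import time

# Hypothetical budget: a slice of the 1-3 second tap-to-approval window.
SCORING_BUDGET_S = 0.300

# Shared worker pool so a slow model call never blocks the authorization path.
_POOL = cf.ThreadPoolExecutor(max_workers=4)


def rules_fallback(txn: dict) -> float:
    """Hypothetical cheap rule used only when the model misses its deadline."""
    return 0.9 if txn["amount"] > 500 else 0.1


def score_within_budget(model, txn: dict, budget_s: float = SCORING_BUDGET_S) -> tuple[float, str]:
    """Return (risk_score, source), where source is "model" or "fallback"."""
    future = _POOL.submit(model, txn)
    try:
        return future.result(timeout=budget_s), "model"
    except cf.TimeoutError:
        # The authorization can't wait: answer with the rule and ignore the late model result.
        return rules_fallback(txn), "fallback"


# Stand-in models for illustration only.
def fast_model(txn: dict) -> float:
    return 0.2


def slow_model(txn: dict) -> float:
    time.sleep(0.5)  # simulates a model call that blows the budget
    return 0.2


print(score_within_budget(fast_model, {"amount": 847.50}))  # (0.2, 'model')
print(score_within_budget(slow_model, {"amount": 847.50}))  # (0.9, 'fallback')
```

Falling back to a rule, rather than approving by default, is a design choice: a missed deadline shouldn’t silently become an unscored approval.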

What the model does in those milliseconds is anomaly detection. It compares the transaction against your behavioral baseline — everything the system has learned about how you actually spend. Your usual merchants. Your typical purchase amounts. The cities where your card normally appears. It’s a detailed picture built up from every transaction you’ve made through that institution.

A 2026 system wouldn’t stop a charge like Sandra’s because someone recognized the Phoenix store as fraudulent. It would stop it because the model calculated that an $847.50 sporting goods purchase in Phoenix sat several standard deviations outside Sandra’s normal behavior. That put the transaction’s risk score above the blocking threshold.
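A toy version of that calculation: build a baseline from past transactions, measure how many standard deviations a new amount sits above the mean, and add penalties for an unfamiliar city and merchant category. Sandra’s sample history, the weights, and `BLOCK_THRESHOLD` are all hypothetical; production models use far more features and learn their weights from data.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Baseline:
    """Hypothetical per-customer profile built from past transactions."""
    amounts: list[float]
    cities: set[str]
    categories: set[str]

    def amount_z(self, amount: float) -> float:
        """How many standard deviations this amount sits from the customer's mean."""
        sigma = pstdev(self.amounts) or 1.0  # guard against a perfectly flat history
        return (amount - mean(self.amounts)) / sigma


def risk_score(b: Baseline, amount: float, city: str, category: str) -> float:
    """Toy additive score in [0, 1]; the weights are illustrative only."""
    z = min(max(b.amount_z(amount), 0.0), 5.0)  # clip to 0..5 standard deviations
    score = 0.5 * z / 5.0                        # up to 0.5 for an unusual amount
    score += 0.3 if city not in b.cities else 0.0
    score += 0.2 if category not in b.categories else 0.0
    return score


BLOCK_THRESHOLD = 0.7  # hypothetical cut-off

sandra = Baseline(
    amounts=[42.10, 63.75, 18.99, 55.00, 71.40, 38.25],
    cities={"Columbus"},
    categories={"grocery", "pharmacy", "fuel"},
)

phoenix = risk_score(sandra, 847.50, "Phoenix", "sporting_goods")
usual = risk_score(sandra, 50.00, "Columbus", "grocery")
print(phoenix >= BLOCK_THRESHOLD, usual >= BLOCK_THRESHOLD)  # True False
```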

That behavioral model isn’t static. Machine learning fraud models update continuously. Every transaction you make, even a completely normal one, refines the model’s picture of you. The model isn’t just asking, “Is this transaction in a risky category?” It’s asking, “Does this transaction look like something this specific person would do?”
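One standard way to keep such a baseline current without re-reading a customer’s whole history is an online update such as Welford’s algorithm, which refreshes the running mean and variance one transaction at a time. This is a sketch of the idea for a single feature (transaction amount), not a description of any particular bank’s model; `RunningProfile` is a hypothetical name.

```python
import math


class RunningProfile:
    """Online mean/variance of transaction amounts (Welford's algorithm)."""

    def __init__(self) -> None:
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, amount: float) -> None:
        """Fold one new transaction into the profile in O(1) time and memory."""
        self.n += 1
        delta = amount - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (amount - self.mean)

    @property
    def std(self) -> float:
        """Population standard deviation of everything seen so far."""
        return math.sqrt(self._m2 / self.n) if self.n > 1 else 0.0

    def z(self, amount: float) -> float:
        """Standard deviations between `amount` and the current mean."""
        return (amount - self.mean) / self.std if self.std > 0 else 0.0


profile = RunningProfile()
for amt in [42.10, 63.75, 18.99, 55.00]:
    profile.update(amt)  # every normal purchase refines the baseline
print(round(profile.mean, 2))  # 44.96
```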

The Deepfake Problem — When AI Is the Attacker

Real-time transaction monitoring is excellent at catching behavioral anomalies. It’s weaker against a different attack: the one where the person authenticating is synthetic.

Synthetic identity fraud works by building a fake person rather than stealing a real one. Fraudsters use AI to generate a complete identity package — a realistic photo, a fabricated document, a credit history built up over months or years using “authorized user” additions. When that synthetic identity applies for a card, there’s no real person to behave anomalously. The fraud is baked in at onboarding.

Biometric authentication was supposed to close this gap. Face recognition. Voice matching. Fingerprints. All things that belong to one real person. But deepfakes have been forcing a recalibration. Synthetic video can now pass basic liveness checks. Voice cloning fools audio systems. The attacks are moving faster than most single-technology defenses can track.

The most effective response in 2026 is multimodal — combining multiple signals rather than relying on one. SEON, one of the leading fraud detection platforms, analyzes digital footprints across 50+ social sources to verify that an identity has a real, consistent online history. An AI-generated identity has clean documents but usually lacks the messy, organic pattern of real digital behavior. That gap is where detection happens.
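A minimal sketch of what “multimodal” means in practice: several independent signals feed one score, so a synthetic identity that passes the document and liveness checks can still be caught by a thin digital footprint. The signal names, weights, and caps below are hypothetical and are not SEON’s or any other vendor’s schema.

```python
import math
from dataclasses import dataclass


@dataclass
class IdentitySignals:
    """Hypothetical onboarding signals for one applicant."""
    document_valid: bool    # ID document passed format and security-feature checks
    liveness_score: float   # 0..1 from a face liveness check
    footprint_sources: int  # online accounts/profiles found for the email and phone
    email_age_days: int     # how long the email address has existed


# Illustrative logistic-style weights; negative values reduce risk.
BIAS = 3.0
W_DOCUMENT, W_LIVENESS, W_FOOTPRINT, W_EMAIL_AGE = -1.0, -1.5, -0.4, -0.002


def synthetic_probability(s: IdentitySignals) -> float:
    """Combine all signals into one probability that the identity is synthetic."""
    logit = (BIAS
             + W_DOCUMENT * float(s.document_valid)
             + W_LIVENESS * s.liveness_score
             + W_FOOTPRINT * min(s.footprint_sources, 15)  # cap each signal's contribution
             + W_EMAIL_AGE * min(s.email_age_days, 3650))
    return 1.0 / (1.0 + math.exp(-logit))


# Clean documents and a passed liveness check, but almost no online history.
synthetic = IdentitySignals(True, 0.95, 0, 20)
# An ordinary applicant with years of messy, organic digital activity.
real = IdentitySignals(True, 0.90, 12, 3000)

print(synthetic_probability(synthetic) > 0.5, synthetic_probability(real) > 0.5)  # True False
```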

Featurespace uses Adaptive Behavioral Analytics to do something different — it doesn’t look for signs of bad behavior. It looks for deviations from good behavior. A real customer has consistent patterns. Even their unusual purchases follow predictable irregularities. A fraudster using a stolen or synthetic identity creates a different kind of irregularity — one the model learns to distinguish.

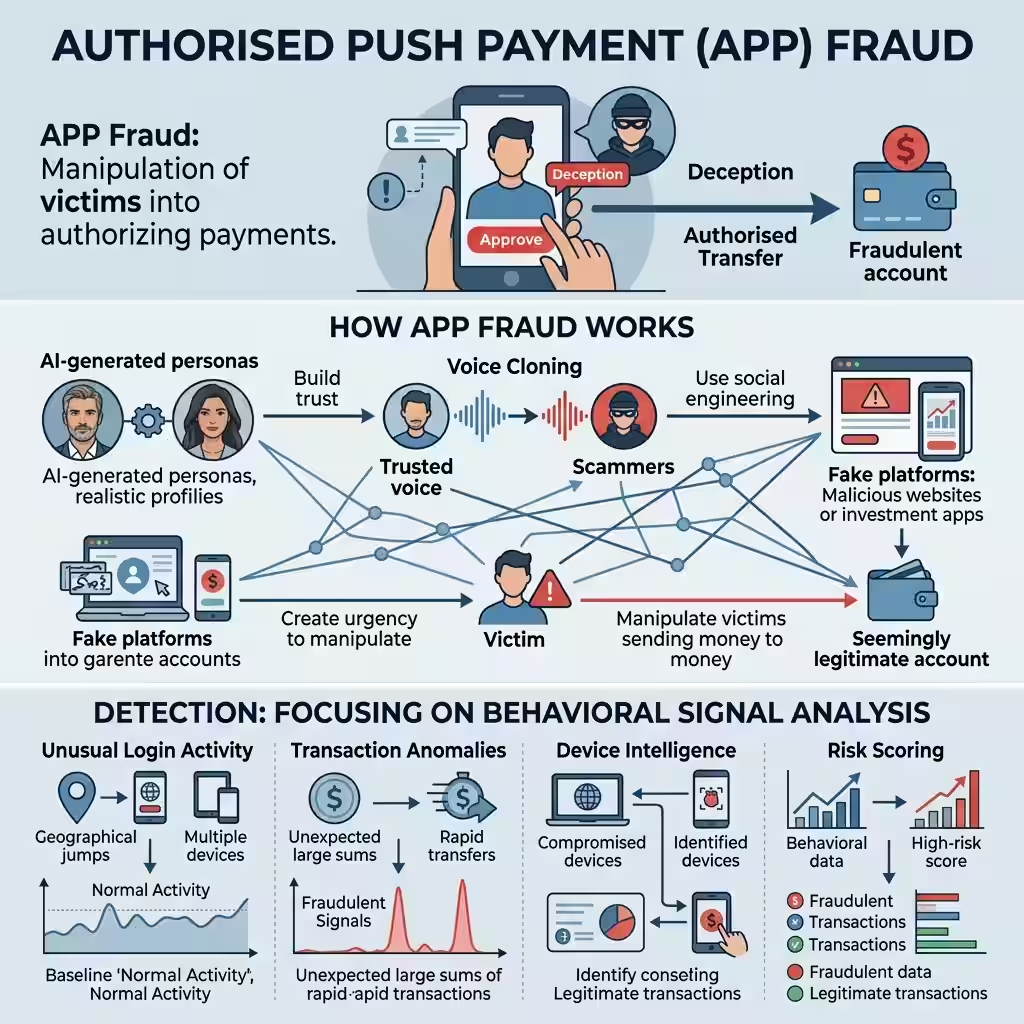

APP Fraud — The Attack That Bypasses All of This

Here’s the type of fraud that keeps detection engineers up at night.

Authorized Push Payment fraud — APP fraud — doesn’t steal your credentials or fake your identity. It manipulates you into authorizing the payment yourself. You move the money. From your account. On your own device. To a criminal’s account.

From a technical standpoint, nothing looks wrong. The authentication is real. The device is yours. The location matches your history. Standard anomaly detection has nothing to flag.

APP fraud uses AI to scale the deception. A kidnapping call where the voice on the line sounds exactly like your child. A romance that’s been building for three months through an AI-generated persona. A fake investment platform where the testimonials, the portfolio screenshots, and the “advisor” calling you are all synthetic. The deception lives in the relationship, not the transaction — which is why the charge looks completely clean.

The most promising detection approach is behavioral signal analysis inside the transaction itself. Someone being manipulated into a large transfer behaves differently before they confirm it. They pause longer than usual. They navigate back and forth. They spend time on a screen they normally breeze past. Featurespace and Fraudio both run models that track these micro-signals in real time — looking for a pre-confirmation pattern that doesn’t match how that specific customer normally authorizes a transfer.

That’s a genuinely difficult technical problem. The signal is subtle. It requires enough session history to build a baseline for how the customer moves through the confirmation flow, not just for the transactions themselves. But it’s the direction the field is moving, because it’s the only detection layer that sits between the manipulation and the transfer.
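A rough sketch of that pre-confirmation comparison, assuming the app logs two hypothetical per-session measurements: seconds spent on the confirmation screen (`dwell_s`) and the number of back-navigations (`back_nav`). It refuses to score until the customer has a minimum number of past sessions, reflecting the baseline requirement described above; `MIN_SESSIONS` and `Z_CUT` are illustrative values.

```python
from statistics import mean, pstdev
from typing import Optional

MIN_SESSIONS = 5  # hypothetical: below this there is no usable baseline
Z_CUT = 3.0       # hypothetical: how unusual a signal must be to flag


def hesitation_flags(history: list[dict], session: dict) -> Optional[list[str]]:
    """Compare one transfer session against this customer's own past sessions.

    Returns None when there is not enough history, otherwise a (possibly empty)
    list of the signals that look unusually hesitant.
    """
    if len(history) < MIN_SESSIONS:
        return None
    flags = []
    for key in ("dwell_s", "back_nav"):
        past = [h[key] for h in history]
        sigma = pstdev(past) or 1.0  # avoid dividing by zero for identical sessions
        z = (session[key] - mean(past)) / sigma
        if z > Z_CUT:
            flags.append(key)
    return flags


past_sessions = [
    {"dwell_s": 4, "back_nav": 0}, {"dwell_s": 5, "back_nav": 0},
    {"dwell_s": 6, "back_nav": 1}, {"dwell_s": 5, "back_nav": 0},
    {"dwell_s": 5, "back_nav": 0},
]
print(hesitation_flags(past_sessions, {"dwell_s": 45, "back_nav": 6}))  # ['dwell_s', 'back_nav']
print(hesitation_flags(past_sessions, {"dwell_s": 5, "back_nav": 0}))   # []
```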

The Scale Problem — Why No Single Institution Can Solve This Alone

One of the more important developments in AI credit card security in 2026 is the shift toward consortium models.

A single bank’s fraud model can only learn from the transactions that flow through that bank. A fraud ring might test stolen cards issued by a small credit union, a regional bank, and a large national bank with small purchases at the same merchant, then run the actual fraud elsewhere. Each institution sees only its own slice of the pattern. None of them sees the full picture.

Fraudio’s “AI Brain” network model connects multiple institutions’ transaction data into a shared detection layer. Fraudio reports a significant performance gain: up to 30x better detection than siloed models. When one institution flags a pattern, the network-wide model updates for every connected participant.
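The structural point can be shown with a toy example. Suppose three institutions each see one small, mildly suspicious authorization at the same merchant, and a merchant is flagged once it accumulates three suspicious reports. Run on any single institution’s data, the rule finds nothing; run on the pooled data, it flags the merchant. The reports, merchant IDs, and `ALERT_AT` threshold are hypothetical, and this says nothing about how Fraudio’s model works internally or about the 30x figure.

```python
from collections import Counter

# Hypothetical reports: (institution, merchant_id) for small authorizations each
# institution considered mildly suspicious on its own.
REPORTS = [
    ("credit_union", "m_4471"),
    ("regional_bank", "m_4471"),
    ("national_bank", "m_4471"),
    ("national_bank", "m_9020"),
]

ALERT_AT = 3  # hypothetical: suspicious reports needed before a merchant is flagged


def flagged_merchants(reports: list[tuple[str, str]], alert_at: int = ALERT_AT) -> list[str]:
    """Flag merchants with at least `alert_at` suspicious reports."""
    counts = Counter(merchant for _, merchant in reports)
    return sorted(m for m, n in counts.items() if n >= alert_at)


# Siloed: each institution can only run the rule over its own reports.
institutions = sorted({inst for inst, _ in REPORTS})
siloed = {inst: flagged_merchants([r for r in REPORTS if r[0] == inst]) for inst in institutions}
# Consortium: the same rule over everyone's reports.
pooled = flagged_merchants(REPORTS)

print(siloed)  # {'credit_union': [], 'national_bank': [], 'regional_bank': []}
print(pooled)  # ['m_4471']
```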

The Global Signal Exchange (GSE) operates on a similar principle at the infrastructure level. It’s a cross-sector clearinghouse where platforms, banks, and telecom companies share verified scam signals — fraudulent URLs, phone numbers, domain patterns — in real time. When a scam campaign is identified, the indicators propagate across the network before the campaign has run its full course. The strategy is to “move left” on the attack chain — disrupting the infrastructure before victims are engaged.

The Australian Financial Crimes Exchange (AFCX) built this into what it calls the Anti-Scam Intelligence Loop (ASIL). Verified scam indicators go out to telecom carriers, which block the numbers. They go to digital platforms, which remove the ads. The criminal campaign finds its reach blocked at the distribution layer before the money-movement phase begins.
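A minimal publish/subscribe sketch of that loop: a clearinghouse fans each indicator out to every participant, and each participant acts only on the indicator types it controls (carriers block numbers, platforms take down ads and URLs). The class names and indicator kinds are hypothetical, this is not the GSE or AFCX interface, and verification is assumed to happen before `publish` is called.

```python
from dataclasses import dataclass, field


@dataclass
class Participant:
    """A member of the exchange that can act on certain indicator types."""
    name: str
    handles: set[str]                               # indicator kinds this member can act on
    blocked: set[str] = field(default_factory=set)  # indicators it has acted on

    def receive(self, kind: str, value: str) -> None:
        if kind in self.handles:
            self.blocked.add(value)  # e.g. block a number, remove an ad


class Exchange:
    """Toy clearinghouse: every published indicator goes to every subscriber."""

    def __init__(self) -> None:
        self.subscribers: list[Participant] = []

    def subscribe(self, member: Participant) -> None:
        self.subscribers.append(member)

    def publish(self, kind: str, value: str) -> None:
        # Assumes the indicator was verified upstream before publication.
        for member in self.subscribers:
            member.receive(kind, value)


exchange = Exchange()
carrier = Participant("telecom_carrier", {"phone"})
platform = Participant("ad_platform", {"ad", "url"})
bank = Participant("bank", {"phone", "url", "account"})
for member in (carrier, platform, bank):
    exchange.subscribe(member)

exchange.publish("phone", "+61 400 000 000")
exchange.publish("url", "https://invest-scam.example")

print(sorted(carrier.blocked), sorted(platform.blocked), len(bank.blocked))
# ['+61 400 000 000'] ['https://invest-scam.example'] 2
```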

This is the most important structural shift in AI fraud detection right now. The arms race isn’t just about better models. It’s about whether defenders can share intelligence fast enough to outpace attackers who already share infrastructure.

Chargeback Prevention — What Happens After the Fraud Anyway

Not all fraud gets stopped in real time. Some of it makes it through. What happens then matters too.

Chargeback prevention AI operates after the transaction — analyzing patterns in disputed charges to identify which merchants, transaction types, and approval patterns are most associated with fraud that slipped through. The goal is a feedback loop: each fraud event improves the model that stops the next one.
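A small sketch of that feedback loop, assuming confirmed chargebacks can be attributed back to the merchant that processed the original transaction. Each chargeback nudges a smoothed per-merchant fraud rate, which the next authorization at that merchant could use as a feature. `MerchantRisk` and its prior values are hypothetical; the smoothing keeps a brand-new merchant from looking perfectly safe or perfectly toxic after a handful of orders.

```python
class MerchantRisk:
    """Smoothed per-merchant fraud rate updated from confirmed chargebacks."""

    def __init__(self, prior_rate: float = 0.01, prior_weight: float = 100.0) -> None:
        self.prior_rate = prior_rate      # hypothetical starting belief: 1% fraud
        self.prior_weight = prior_weight  # how many "virtual" orders that belief is worth
        self.approved: dict[str, int] = {}
        self.charged_back: dict[str, int] = {}

    def record_approval(self, merchant: str) -> None:
        self.approved[merchant] = self.approved.get(merchant, 0) + 1

    def record_chargeback(self, merchant: str) -> None:
        # Confirmed fraud that slipped through becomes a training signal.
        self.charged_back[merchant] = self.charged_back.get(merchant, 0) + 1

    def fraud_rate(self, merchant: str) -> float:
        """(chargebacks + prior pseudo-count) / (approvals + prior weight)."""
        a = self.approved.get(merchant, 0)
        c = self.charged_back.get(merchant, 0)
        return (c + self.prior_rate * self.prior_weight) / (a + self.prior_weight)


risk = MerchantRisk()
for _ in range(200):
    risk.record_approval("m_4471")
for _ in range(30):
    risk.record_chargeback("m_4471")

print(round(risk.fraud_rate("new_merchant"), 4))  # 0.01
print(round(risk.fraud_rate("m_4471"), 4))        # 0.1033
```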

Riskified backs this with an unusual commitment: a 100% chargeback guarantee for e-commerce merchants. When its model approves a transaction and fraud occurs anyway, Riskified absorbs the loss. That’s not just a marketing position. It’s a financial bet on the accuracy of its detection model, and the bet is only sustainable if the model genuinely catches most of the fraud.
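Simple arithmetic shows why a guarantee like this only works with a strong model. Assume, hypothetically, that the guarantor earns a fee `fee` on every approved dollar, a share `p` of attempted order value is fraudulent, the model declines a share `recall` of that fraud, and it wrongly declines a share `fpr` of legitimate orders. Fees come from approved volume; losses equal the fraud that gets through. None of these inputs are Riskified’s actual figures.

```python
def margin_per_dollar(fee: float, p: float, recall: float, fpr: float) -> float:
    """Fee income minus absorbed fraud losses, per dollar of attempted order value."""
    approved_good = (1 - p) * (1 - fpr)  # legitimate volume that gets approved
    approved_fraud = p * (1 - recall)    # fraud that slips through and is guaranteed
    return fee * (approved_good + approved_fraud) - approved_fraud


def breakeven_recall(fee: float, p: float, fpr: float) -> float:
    """Recall at which margin_per_dollar is exactly zero."""
    return 1 - fee * (1 - p) * (1 - fpr) / (p * (1 - fee))


# Hypothetical inputs: 1% fee, 5% of attempted order value is fraud, 2% false declines.
r_star = breakeven_recall(fee=0.01, p=0.05, fpr=0.02)
print(round(r_star, 3))  # 0.812
```

Under these assumed inputs the model has to stop roughly 81% of fraud just to break even, which is the sense in which the guarantee is a bet on detection accuracy.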

For consumers, the most important practical effect of chargeback prevention AI is faster resolution. When a dispute is flagged, AI systems can cross-reference the transaction against known fraud patterns, determine whether the dispute fits a recognized attack signature, and in many cases auto-resolve the claim without weeks of manual review. Sandra’s $847 charge would be reviewed, matched against a pattern, and reversed in hours rather than weeks.
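A simplified sketch of that triage step: a disputed transaction is checked against a small library of attack signatures, and a match routes it to automatic refund while anything else goes to a human reviewer. The signature names, fields, and rules are hypothetical; real systems weigh many more signals before resolving a claim automatically.

```python
from typing import Callable

Dispute = dict
# Hypothetical signatures: name -> rule over the disputed transaction's fields.
SIGNATURES: dict[str, Callable[[Dispute], bool]] = {
    "out_of_region_new_category": lambda d: (
        d["merchant_state"] != d["home_state"] and d["category"] not in d["usual_categories"]
    ),
    "card_testing_followup": lambda d: d["small_auths_prior_24h"] >= 3,
}


def triage(dispute: Dispute) -> tuple[str, list[str]]:
    """Return ("auto_refund" | "manual_review", matched signature names)."""
    hits = [name for name, rule in SIGNATURES.items() if rule(dispute)]
    return ("auto_refund" if hits else "manual_review"), hits


sandra_dispute = {
    "merchant_state": "AZ", "home_state": "OH",
    "category": "sporting_goods", "usual_categories": {"grocery", "pharmacy", "fuel"},
    "small_auths_prior_24h": 0,
}
print(triage(sandra_dispute))  # ('auto_refund', ['out_of_region_new_category'])
```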

People Also Ask

How does AI detect credit card fraud before it happens?

AI fraud detection runs inside the transaction authorization window — usually in under a second. It compares each transaction against a behavioral model built from your transaction history. If the transaction is anomalous enough — wrong location, wrong merchant category, wrong amount relative to your patterns — the model assigns a high risk score and the transaction is declined or flagged for review. This happens before the charge clears.

How can I use AI to protect my bank account from hackers?

Most major banks and card networks already run AI fraud detection on your account automatically. On the consumer side, enabling real-time transaction alerts is one of the most effective steps you can take: it closes the gap between when fraud is detected and when you’re notified. Strong biometric authentication for your banking app adds a second layer, though deepfake attacks on voice and face authentication are improving. Using a card with zero-liability fraud protection shifts the financial risk to the institution.

What is anomaly detection in fraud prevention?

Anomaly detection means the AI is looking for behavior that doesn’t match the established baseline for a specific account. Your spending patterns are unique enough that a significant deviation — buying sporting equipment in Phoenix when you’ve never left the Midwest — stands out clearly. The model doesn’t need to know the Phoenix store is fraudulent. It just needs to know this transaction doesn’t look like you.

What is APP fraud and can AI stop it?

Authorized Push Payment fraud is when you’re manipulated into sending money yourself. It doesn’t steal credentials or spoof your identity — it deceives you directly. Standard anomaly detection often misses it because the transaction is technically legitimate. Newer systems that monitor behavioral signals before the transfer confirmation — hesitation patterns, navigation anomalies — can flag these transactions. But this type of fraud is still the hardest for AI to stop reliably.

Is my financial data safe with AI fraud detection systems?

AI fraud detection uses your transaction data to build behavioral models. Because those models have to recognize you specifically, major institutions typically protect the data with encryption and pseudonymization (replacing card and account numbers with tokens) rather than full anonymization. The model learns behavioral patterns without retaining identifiable transaction records beyond regulatory requirements. The risk is not from the fraud detection system itself but from the broader security posture of the institution and its vendors, which is why third-party risk management matters as much as the detection technology.
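One way to protect identifiers while still letting a model link every transaction to the same account is keyed hashing: the card number is replaced by an HMAC token, so the model sees a stable identifier instead of the raw number. This sketch uses Python’s standard `hmac` module; `TOKEN_KEY` is a placeholder (in practice the key would live in a key-management system), and this is not any specific institution’s scheme.

```python
import hashlib
import hmac

# Placeholder secret for illustration only; a real key would come from a KMS/HSM.
TOKEN_KEY = b"replace-with-a-managed-secret"


def pseudonymize_card(pan: str, key: bytes = TOKEN_KEY) -> str:
    """Map a card number to a stable token with HMAC-SHA256.

    The same card always yields the same token, so a behavioral model can
    still group transactions by account, but the raw number is not stored.
    Without the key, the token can't feasibly be recomputed from a guessed number.
    """
    digits = "".join(ch for ch in pan if ch.isdigit())  # normalize spaces/dashes
    return hmac.new(key, digits.encode(), hashlib.sha256).hexdigest()


a = pseudonymize_card("4111 1111 1111 1111")
b = pseudonymize_card("4111-1111-1111-1111")
print(a == b, len(a))  # True 64
```

The key matters: card numbers have so few possible values that a plain, unkeyed SHA-256 of one could be reversed by brute force.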

What Would Happen to Sandra Now

Run the same charge through her bank’s system today. The model flags the Phoenix transaction and holds it before it clears. Sandra gets a real-time alert on her phone, confirms it wasn’t her, and the charge is blocked.

The fraudster never gets the money. The whole thing resolves in about four minutes.

She doesn’t know any of this is happening until the alert comes through. By then the AI has already done the work.

That’s what AI fraud detection looks like in practice. Not a surveillance system watching your purchases. A model that has built a picture of your financial behavior and gets suspicious when something doesn’t fit.

Global illicit financial activity is growing at 19% annually. The fraud is industrialized and AI-powered. The detection is too — which is the only reason the math still works for anyone.

Your card’s fraud model is running right now. Every transaction you make is training it further. By the time the next fraudster tries to use your card, the system will know your patterns well enough to stop the charge before you ever see it.

About the Author: Marcus Delray covers AI in financial services, cybersecurity, and fraud detection. He has written about fintech platforms and financial crime prevention for nine years.

Disclaimer: General information only. Not financial, legal, or security advice. All statistics, incidents, and platform descriptions are based on available public data as of May 2026. The author and publisher accept no liability for outcomes based on this content.