AI Smart Contract Auditing: How Machine Learning Is Catching the Bugs That Cost DeFi Billions

So, 2025 was kind of a disaster for DeFi security. Not in a dramatic, unexpected way — more in the way where you watch something unfold and think “yeah, we probably should have seen that coming.” $3.4 billion walked out the door. And before you picture some elaborate heist with novel attack vectors nobody had seen — nah. Mostly reentrancy bugs. Access control failures. The stuff that’s been sitting in security guides since like 2016.

That’s the part I keep getting stuck on. Not the dollar amount. The fact that we knew. The fixes existed. There are blog posts, audits, even conference talks specifically about these bug classes. And protocols still got drained.

I’ve sat through a few post-mortem calls over the past year and the story is almost always the same. Audit happened. Audit was probably fine. Then the team shipped new features, a dependency got updated, market conditions changed — and suddenly the audit is describing a slightly different protocol than the one actually running on mainnet. Nobody noticed the drift until it was too late.

That’s where AI-powered monitoring and auditing comes in. Not as some cure-all, because it isn’t. But as a way to keep watching after the audit is done — which, it turns out, is when most of the real risk shows up.

Nineteen Seconds. That’s the Number That Got Me.

Okay so here’s the case study everyone in this space keeps citing and honestly, it deserves the attention. Neruduab Finance got probed by a flash loan reentrancy attack last year. Before the attack could complete, their AI monitoring system flagged it. A circuit breaker kicked in automatically. The whole thing — attack starts, detected, stopped — happened across three blocks. Nineteen seconds. $40 million didn’t move.

Nineteen. Seconds.

Now contrast that with what happens when a human team is in charge of response. And I’m not trying to be harsh here — security analysts are good at their jobs. But when an alert fires, there’s a process. First you check if it’s actually real and not another false positive, because false positives are constant. Then you figure out the severity. Then someone with authority has to make a call on what action to take. Then you act. On a fast day with everyone awake and paying attention? Maybe ten to fifteen minutes. Realistically? More like twenty to thirty.

A flash loan attack completes in one transaction. One block. Probably under thirty seconds from start to finish. By the time a human team even confirms the alert is real, the money is already gone and being routed through a mixer somewhere.

That math — 19 seconds AI vs 20+ minutes human — is why continuous AI monitoring stopped being a nice-to-have and became table stakes for protocols that take security seriously. Forta, Hypernative, Hexagate — these platforms watch live contract behavior around the clock, not as scheduled scans. When they’re paired with on-chain circuit breakers, like ERC-7265 implementations that auto-freeze funds or cap withdrawals when an anomaly hits a threshold, the response doesn’t even require a human in the loop. The contract protects itself. Neruduab’s team saved $40 million without needing to be awake at the right moment. That’s kind of the whole point.
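To make the circuit-breaker idea concrete, here’s a minimal sketch of the threshold logic, written in Python rather than Solidity so it runs anywhere. It’s loosely inspired by the rate-limit idea behind ERC-7265 but is not that EIP’s interface. The class name, the 10%-per-hour limit, and the “freeze until reset” behavior are all assumptions for illustration.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Tuple


@dataclass
class OutflowCircuitBreaker:
    """Hypothetical toy breaker: trips when outflows inside a rolling window
    would exceed a fixed fraction of total value locked (TVL)."""
    tvl: float
    max_outflow_fraction: float  # e.g. 0.10 means 10% of TVL per window
    window_seconds: int
    tripped: bool = False
    _events: Deque[Tuple[int, float]] = field(default_factory=deque)

    def _window_outflow(self, now: int) -> float:
        # Drop withdrawals that have aged out of the rolling window.
        while self._events and self._events[0][0] <= now - self.window_seconds:
            self._events.popleft()
        return sum(amount for _, amount in self._events)

    def withdraw(self, amount: float, now: int) -> bool:
        """Return True if the withdrawal executes, False if it is blocked."""
        if self.tripped:
            return False
        limit = self.tvl * self.max_outflow_fraction
        if self._window_outflow(now) + amount > limit:
            # Anomaly: freeze further outflows until a human reviews and resets.
            self.tripped = True
            return False
        self._events.append((now, amount))
        self.tvl -= amount
        return True

    def reset(self) -> None:
        self.tripped = False


breaker = OutflowCircuitBreaker(tvl=40_000_000, max_outflow_fraction=0.10, window_seconds=3600)
breaker.withdraw(500_000, now=0)     # normal withdrawal goes through
breaker.withdraw(5_000_000, now=12)  # blows past the hourly cap, breaker trips
```

The point is that nobody has to be awake for the second call to fail. The real proposal defines its own interface and its own rules for what happens to blocked withdrawals; this only shows the “threshold trips, outflows stop” core.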

What AI Auditing Actually Does — Because the Pitch Decks Are Useless

Okay, “AI-powered” is on literally everything in security right now. Half the time it means almost nothing. Let me try to actually explain what the legit version looks like.

Reading Intent, Not Just Syntax

Regular static analysis scans code without running it and flags patterns that match known vulnerability classes. It’s a smart Ctrl+F, basically. The AI version layers NLP on top — the system tries to understand what the developer was actually going for, not just what the code literally says. This matters because tons of real bugs come from code that’s technically valid, compiles fine, passes basic checks — and still does something the developer never intended. Pure pattern-matching misses those. NLP-enhanced analysis catches a lot more of them because it’s reading for meaning.
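Here’s what the “smart Ctrl+F” layer looks like at its most basic: a sketch, assuming we already have a single Solidity function body as text, that flags any mapping write appearing after an external call (the classic reentrancy smell). The regexes and the function name are hypothetical and deliberately naive.

```python
import re
from typing import List

# Hypothetical, deliberately naive patterns for a pattern-matching pass.
EXTERNAL_CALL = re.compile(r"\.call\{|\.call\(|\.transfer\(|\.send\(")
# A mapping write such as `balances[x] = ...` or `balances[x] -= ...` (not `==` or `>=`).
STATE_WRITE = re.compile(r"\b\w+\[[^\]]+\]\s*(?:[-+*/]?=)(?!=)")


def flag_call_before_write(function_body: str) -> List[int]:
    """Return 1-based line numbers of state writes that follow an external call."""
    seen_call = False
    flagged = []
    for lineno, line in enumerate(function_body.splitlines(), start=1):
        if EXTERNAL_CALL.search(line):
            seen_call = True
        elif seen_call and STATE_WRITE.search(line):
            flagged.append(lineno)
    return flagged


VULNERABLE = """\
require(balances[msg.sender] >= amount);
(bool ok, ) = msg.sender.call{value: amount}("");
require(ok);
balances[msg.sender] -= amount;
"""

FIXED = """\
require(balances[msg.sender] >= amount);
balances[msg.sender] -= amount;
(bool ok, ) = msg.sender.call{value: amount}("");
require(ok);
"""
```

`flag_call_before_write(VULNERABLE)` flags line 4, and the checks-effects-interactions version comes back clean. It also shows the limit: move the write into a helper function, or split the bug across two functions, and this scan sees nothing. That gap is exactly what the intent-level analysis is trying to close.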

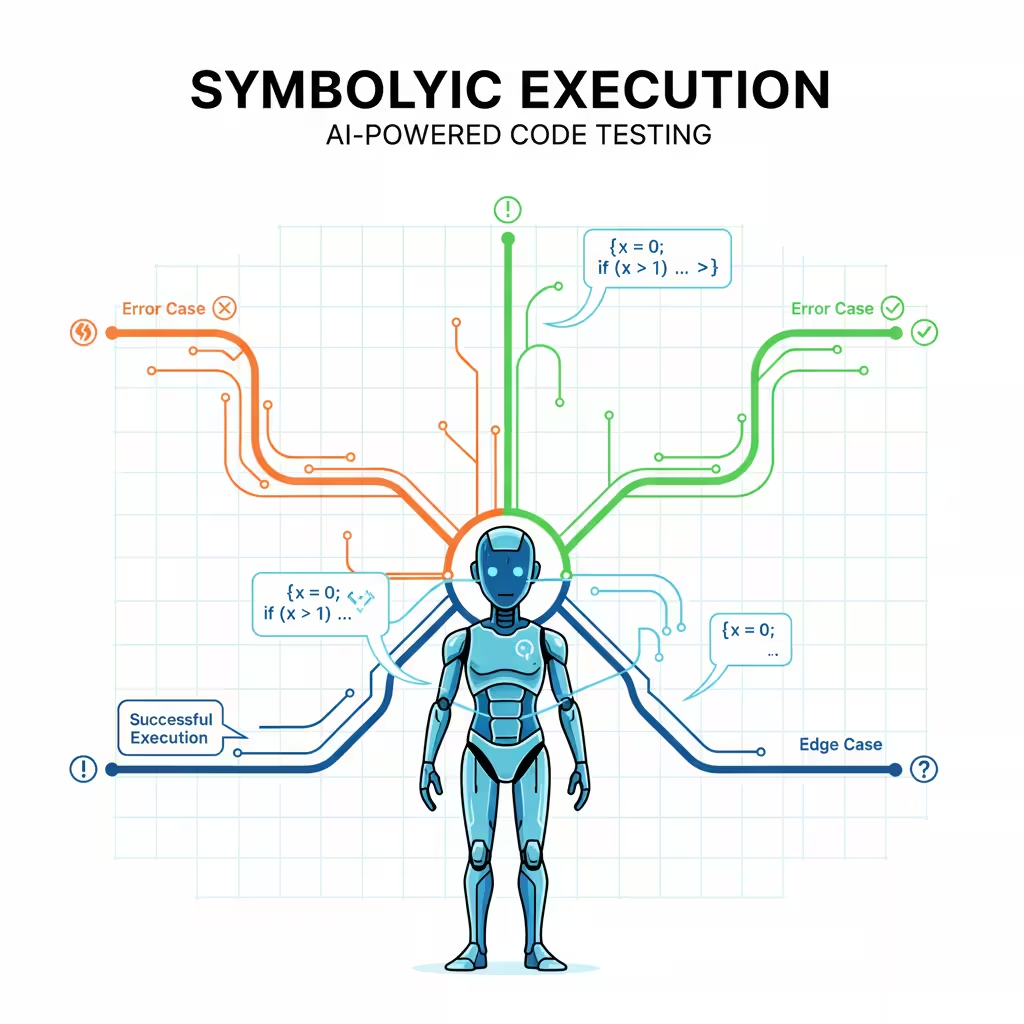

Symbolic Execution: Covering Every Path at Once

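This one genuinely impresses people when they understand what it’s doing. Instead of picking specific test inputs and running scenarios one at a time, the way a human QA person would, symbolic execution treats the inputs as symbols and tracks the constraints each branch places on them. Every path through the contract becomes a set of conditions, and a constraint solver checks whether any input can satisfy them, for example a path where the caller walks away with more than they deposited. One path stands in for every concrete input that would take it, which is how these tools reason about enormous input spaces without enumerating them. The catch is path explosion: loops and deep call chains multiply the number of paths, so real tools bound how far they explore. Then AI-guided fuzzing piles on with adversarial inputs specifically designed to stress that particular codebase. The ground a human team couldn’t cover in a week, these tools work through overnight. That’s not a marketing claim; it’s just the math of how many paths need testing.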
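A drastically simplified sketch of the idea: one unsigned input `x`, branches that only compare `x` to a constant, and a path condition tracked as an interval instead of an SMT formula. Real tools such as Mythril hand full EVM path constraints to a solver like Z3; the toy `withdraw` below (with a hypothetical bug that checks against a 1,000 cap instead of the caller’s 100 balance) is invented for illustration.

```python
from dataclasses import dataclass
from typing import Iterator, Optional, Tuple, Union

UINT256_MAX = 2**256 - 1


@dataclass
class Leaf:
    outcome: str


@dataclass
class Branch:
    """if x < threshold: follow `below`, else follow `at_or_above`."""
    threshold: int
    below: "Node"
    at_or_above: "Node"


Node = Union[Leaf, Branch]


def explore(node: Node, lo: int = 0, hi: int = UINT256_MAX) -> Iterator[Tuple[str, int, int]]:
    """Yield (outcome, lo, hi) for every feasible path. [lo, hi] is the whole
    set of inputs that reach that outcome, so one path covers all of them."""
    if isinstance(node, Leaf):
        yield node.outcome, lo, hi
        return
    # Path where x < threshold.
    if lo <= min(hi, node.threshold - 1):
        yield from explore(node.below, lo, min(hi, node.threshold - 1))
    # Path where x >= threshold.
    if max(lo, node.threshold) <= hi:
        yield from explore(node.at_or_above, max(lo, node.threshold), hi)


def find_witness(root: Node, outcome: str, min_x: int) -> Optional[int]:
    """Smallest input that reaches `outcome` with x >= min_x, or None."""
    for out, lo, hi in explore(root):
        if out == outcome and max(lo, min_x) <= hi:
            return max(lo, min_x)
    return None


BALANCE = 100  # hypothetical caller balance
# Buggy toy withdraw(amount): revert on 0, pay anything up to 1000, revert above.
withdraw = Branch(1, Leaf("revert"), Branch(1001, Leaf("pay"), Leaf("revert")))
```

Three paths cover all 2^256 inputs, and asking for a “pay” path with `amount > BALANCE` returns a concrete exploit input, 101, without ever trying 101 specific values.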

Numbers Worth Knowing

King Fahd University of Petroleum and Minerals published research that’s become the reference point in this space. Their hybrid model — CodeBERT embeddings for semantic understanding of Solidity, layered with pattern-based feature vectors — hit 98.3% accuracy on reentrancy detection using the Autoencoder-LSTM version. 98.01% with the CodeBERT-Transformer Encoder. And look, reentrancy isn’t some obscure edge case. It’s been draining protocols since The DAO hack in 2016. Getting automated detection to 98% on that bug class specifically is a real milestone, not a lab curiosity.
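One reading tip when comparing numbers like these: accuracy is the share of all contracts classified correctly, so on a dataset where most contracts are safe, it can stay high even while some bugs slip through. The counts below are invented purely to show the arithmetic; they are not from the KFUPM paper.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    tp: int  # vulnerable contracts correctly flagged
    fp: int  # safe contracts wrongly flagged
    tn: int  # safe contracts correctly passed
    fn: int  # vulnerable contracts missed

    def accuracy(self) -> float:
        return (self.tp + self.tn) / (self.tp + self.fp + self.tn + self.fn)

    def precision(self) -> float:
        return self.tp / (self.tp + self.fp)

    def recall(self) -> float:
        return self.tp / (self.tp + self.fn)


# Hypothetical: 1,000 contracts, 50 of them vulnerable; the detector catches 35.
example = Confusion(tp=35, fp=3, tn=947, fn=15)
```

That hypothetical detector scores 98.2% accuracy while missing 30% of the vulnerable contracts (recall 0.70). So it’s worth checking whether a paper also reports precision and recall, and how balanced its dataset was.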

EVMbench: The One Benchmark That’s Not Self-Serving

Security vendor benchmarks are, with a few exceptions, completely useless for comparison. Every company runs tests where their product wins. It’s almost a genre at this point. OpenAI and Paradigm built EVMbench because someone needed to create an external standard that vendors couldn’t quietly optimize around.

Three modes. Detect — find vulnerabilities across 120 curated scenarios. Patch — actually fix the vulnerability without breaking everything else in the contract, which sounds easy and isn’t. Exploit — execute a fund-draining attack in a sandboxed environment to prove the vulnerability is real and not theoretical hand-waving.
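The exploit-mode idea, “prove it by draining funds in a sandbox,” can be shown at toy scale. This is a Python model of the classic reentrancy bug, not EVMbench’s harness: the vault pays out before zeroing the caller’s balance, and the attacker’s receive hook calls `withdraw` again. The class, the `cei` flag, and the amounts are hypothetical.

```python
from typing import Callable, Dict, Optional


class ToyVault:
    """Hypothetical model of an ETH vault. cei=True applies checks-effects-interactions."""

    def __init__(self, cei: bool) -> None:
        self.cei = cei
        self.balances: Dict[str, int] = {}
        self.eth = 0

    def deposit(self, who: str, amount: int) -> None:
        self.balances[who] = self.balances.get(who, 0) + amount
        self.eth += amount

    def withdraw(self, who: str, on_receive: Optional[Callable[[int], None]] = None) -> None:
        amount = self.balances.get(who, 0)
        if amount == 0 or amount > self.eth:
            return  # stands in for a revert
        if self.cei:
            self.balances[who] = 0  # effect first, before any external call
        self.eth -= amount
        if on_receive is not None:
            on_receive(amount)  # external call: the recipient's code runs here
        if not self.cei:
            self.balances[who] = 0  # too late: re-entrant calls already saw the old balance


def exploit(vault: ToyVault, stake: int) -> int:
    """Sandbox check: deposit `stake`, re-enter on every payout, return net profit."""
    received = 0

    def on_receive(amount: int) -> None:
        nonlocal received
        received += amount
        vault.withdraw("attacker", on_receive)  # re-enter while the balance is stale

    vault.deposit("attacker", stake)
    vault.withdraw("attacker", on_receive)
    return received - stake
```

With 100 of honest deposits in the vulnerable vault, `exploit(vault, 10)` nets 100 and leaves the vault empty; the checks-effects-interactions version nets 0. A positive profit is the pass/fail signal, which is why exploit mode is harder to fake than a list of findings.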

GPT-5.3-Codex scored 72.2% in exploit mode. GPT-5, one generation back, scored 31.9%. That’s more than double in a single model cycle. Worth noting: the improvement applies to both defensive and offensive capabilities, because the same models power both sides of this equation.

Post-Launch Is When Protocols Actually Get Exploited

Go through the 2025 exploit list and count how many of those protocols had pre-launch audits. Most of them. Plenty had recent ones. The audit isn’t usually where the story goes wrong — it’s the assumption that the audit is where security ends.

After a protocol launches, things change constantly. New features go in. Integrations get bolted on. Libraries update — sometimes with changelog entries nobody reads. Market conditions shift and create attack paths that didn’t exist when the original code was written. Specific TVL levels that enable flash loan strategies. Liquidity conditions that make oracle manipulation viable. Incentive structures that nobody stress-tested at scale. The contract is frozen in time. The environment it operates in isn’t.
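Here’s why liquidity conditions matter for oracle manipulation. If a protocol reads its price straight from a constant-product (x·y = k) pool, one large swap, easily funded with a flash loan, moves that price. A minimal sketch, ignoring fees, with hypothetical pool sizes:

```python
def spot_price(reserve_in: float, reserve_out: float) -> float:
    """Price of the `in` token quoted in the `out` token for an x*y=k pool."""
    return reserve_out / reserve_in


def price_after_swap(reserve_in: float, reserve_out: float, amount_in: float) -> float:
    """Spot price after swapping `amount_in` into the pool (fees ignored)."""
    k = reserve_in * reserve_out
    new_in = reserve_in + amount_in
    new_out = k / new_in  # the invariant x*y=k fixes the other reserve
    return spot_price(new_in, new_out)


# Hypothetical pools, both quoting 2000 per token before the swap.
shallow_before = spot_price(1_000, 2_000_000)
shallow_after = price_after_swap(1_000, 2_000_000, 1_000)
deep_after = price_after_swap(100_000, 200_000_000, 1_000)
```

The same 1,000-token swap drags the shallow pool’s price from 2000 to 500 and the deep pool’s only to about 1960.6. Code that was safe against the deep pool becomes exploitable if liquidity migrates away, without a single line changing.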

The teams that consistently avoid getting hit treat security like observability — as a continuous property of the running system, not a gate you pass through once. Security checks in the deployment pipeline. Behavioral baselines on live contracts. Alerts that surface anomalies early. That posture is fundamentally different from “we did an audit before launch.”
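A behavioral baseline can start as simply as this: compare each hour’s outflow against the mean and spread of the previous day and alert when it sits far outside. This is a minimal z-score sketch under assumed parameters (24-hour window, 4-sigma threshold, made-up outflow numbers); it isn’t how any of the platforms named here actually score anomalies.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def anomalies(outflows: Sequence[float], window: int = 24, threshold: float = 4.0) -> List[int]:
    """Indices whose outflow sits more than `threshold` standard deviations
    above the mean of the preceding `window` observations."""
    flagged = []
    for i in range(window, len(outflows)):
        baseline = outflows[i - window:i]
        mu = mean(baseline)
        sigma = pstdev(baseline)
        if sigma == 0:
            # Perfectly flat baseline: treat any increase as anomalous.
            if outflows[i] > mu:
                flagged.append(i)
            continue
        if (outflows[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical hourly outflows: a quiet day, one normal hour, then a spike.
history = [100.0 if h % 2 == 0 else 120.0 for h in range(24)] + [110.0, 5000.0]
```

`anomalies(history)` returns `[25]`: the normal hour passes and the spike gets flagged. Real monitors layer many more signals on top (counterparties, call patterns, mempool activity), but the “learn normal, alert on departures” shape is the same.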

Platforms That Have Actually Earned It

AuditOne is interesting because their model is pretty different from the typical “schedule a scan” approach. Their audit agents run on-chain continuously across networks including IOTA and Base, paid for in $AUDIT token. The product isn’t an audit event; it’s persistent coverage that doesn’t stop after the report is filed.

Forta, Hypernative, and Hexagate are the names that keep coming up when you look at incidents where protocols almost got hit but didn’t. That’s the most credible endorsement available — you appear in near-miss stories, not just disaster post-mortems. They run behavioral monitoring on live contracts and escalate anomalies early enough to act on.

Tools like Cuechain push security further upstream into the development process itself. You catch the bug while writing the code rather than after deploying it. Way cheaper, way less stressful. Most teams only adopt these after experiencing an incident, which is annoying but genuinely human.

Trust Is the Product — XAI Is How You Build It

Here’s an underrated DeFi problem. Smart contract code is technically public — it’s on the blockchain, anyone can pull it up. But it’s written in Solidity. The overwhelming majority of people using DeFi protocols are not Solidity developers. The transparency that gets marketed as a feature is functionally meaningless to most actual users.

Researchers call this the black box problem, and it’s a real trust killer. When an automated system flags your transaction or freezes your withdrawal and you have zero explanation you can actually understand, the reaction isn’t “fascinating, I wonder what the model detected.” It’s suspicion, then fund withdrawal, then you’re gone. Qualtrics research from 2025 points the same way: trust is the primary driver of financial decisions in digital markets. Not returns, not fees, not UI quality. Trust. DeFi has always struggled with it.

Explainable AI (XAI) is the direct fix. Tools like SHAP quantify how much each input pushed a model’s decision one way or the other, and a front end can turn the top factors into language that doesn’t require technical knowledge to parse. In a protocol’s UI, that might surface as: withdrawal flagged because the amount is four times your 90-day average, the destination wallet is nine minutes old, and that same address triggered alerts on two other protocols earlier today. A user can actually evaluate that. Maybe they still think the flag is wrong; fine, that’s a legitimate disagreement. But they’re not just staring at a frozen screen with no context. That difference matters enormously for trust.
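Here’s a sketch of the attribution math behind that kind of message. SHAP estimates Shapley values efficiently for real models; this computes them exactly by brute force over feature coalitions (features outside a coalition take baseline values), which is only practical for a handful of features. The risk model, feature names, weights, baseline, and plain-language labels are all hypothetical.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Dict, List, Set

Features = Dict[str, float]


def risk_score(f: Features) -> float:
    """Hypothetical additive risk model for a single withdrawal."""
    score = 1.5 * max(0.0, f["amount_vs_90d_avg"] - 1.0)
    score += 2.0 if f["dest_wallet_age_min"] < 60 else 0.0
    score += 1.0 * f["alerts_elsewhere_today"]
    return score


def shapley(model: Callable[[Features], float], x: Features, baseline: Features) -> Features:
    """Exact Shapley values of each feature's contribution to model(x) - model(baseline)."""
    names = list(x)
    n = len(names)

    def value(coalition: Set[str]) -> float:
        return model({k: (x[k] if k in coalition else baseline[k]) for k in names})

    phi: Features = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {name}) - value(s))
        phi[name] = total
    return phi


LABELS = {  # hypothetical plain-language phrasing for the UI
    "amount_vs_90d_avg": "amount is far above your 90-day average",
    "dest_wallet_age_min": "destination wallet was created minutes ago",
    "alerts_elsewhere_today": "destination address was flagged on other protocols today",
}


def explain(phi: Features, top: int = 3) -> List[str]:
    ranked = sorted(phi.items(), key=lambda kv: kv[1], reverse=True)
    return [f"{LABELS[k]} (+{v:.1f})" for k, v in ranked[:top] if v > 0]


EXAMPLE_X = {"amount_vs_90d_avg": 4.0, "dest_wallet_age_min": 9.0, "alerts_elsewhere_today": 2.0}
EXAMPLE_BASELINE = {"amount_vs_90d_avg": 1.0, "dest_wallet_age_min": 100_000.0, "alerts_elsewhere_today": 0.0}
```

The attributions always add up to the gap between the flagged score and the baseline score, which is what lets a UI say “these three things, in this proportion, caused the flag” rather than just “flagged.”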

Regulators are pushing this direction too, for what it’s worth. The UK’s Financial Conduct Authority (FCA) is requiring decision transparency from financial services. XAI gives DeFi a path to compliance that doesn’t require centralizing the architecture.

The GENIUS Act — Boring Name, Real Impact

Years of U.S. regulatory ambiguity in crypto had a cost that mostly showed up in what didn’t happen rather than what did. Institutional capital stayed light because legal teams couldn’t get clean answers about applicable rules. Solid projects quietly failed because they couldn’t secure basic banking or get legal clarity on their structure. Nobody was writing headlines about it — it was just a slow, quiet drain on the ecosystem.

The GENIUS Act, signed in July 2025, cleared up a big piece of that by setting a federal framework for payment stablecoins. Real framework, consistent enforcement, standards people could actually build to. Capital that had been waiting on the sidelines started moving. Market participation shifted noticeably within a quarter of passage.

The sectors pulling that energy right now: Real-World Asset tokenization is the biggest story — Securitize, Figure, and Ondo Finance are already doing meaningful volume in private equity digitization, blockchain home equity products, and tokenized U.S. Treasuries. AI-powered agentic commerce protocols are growing fast. High-performance compliant trading infrastructure is attracting serious investment. The regulatory clarity didn’t just lower legal risk — it opened market categories that had been effectively closed off.

The Part Nobody Wants to Say

Defensive AI and offensive AI pull from the same toolbox. There’s no special version of these capabilities reserved for security teams. Malicious actors are already using AI to automate vulnerability scanning at scale. Reconnaissance that took skilled attackers days of manual work can now be partially automated. The exploitable surface area of a given protocol hasn’t changed; what has changed is how fast both sides can find the weak spots in it.

Post-quantum cryptography is worth planning for even if the timeline feels abstract. Most DeFi infrastructure relies on elliptic-curve signature schemes (ECDSA over secp256k1, in Ethereum’s case) that a sufficiently large quantum computer will eventually be able to break. Nobody can give you a reliable ETA; I’ve heard everything from five years to twenty. But migration costs compound the longer you wait. Teams moving toward quantum-resistant standards now are making a bet that’s almost certainly right regardless of timing.

The security side is genuinely improving. So is the attack side. The teams that do well long-term are the ones that operate like they understand this is a continuous competition, not a problem you solve once.

Safety Assumptions Have an Expiry Date

The Forbes Technology Council came up with a framing I actually use when talking to founders now: treat every deployed contract as code whose safety assumptions have an expiry date. The code itself is permanent — blockchain keeps it forever. The reasoning behind it was valid for a specific moment, specific market conditions, a specific threat landscape. All three of those keep evolving.

Safe at launch can mean vulnerable at month 16. New attack techniques get documented and shared. Dependencies change. Market conditions create attack vectors that simply weren’t viable when the contract was designed. Most serious DeFi exploits aren’t sophisticated novel zero-days — they’re old code colliding with a changed environment.

The protocols with the best long-term records treat security like infrastructure maintenance. Pre-launch AI audits. Continuous post-deployment monitoring. Circuit breakers that are actually tested, not just set up and forgotten. Architecture reviews on a real schedule. Not paranoid — just accurate about how exposure compounds when you’re not watching.

Where This Leaves Things

Hybrid is the right model and it’s pretty much settled at this point. AI for scale and speed — watching thousands of contracts simultaneously, responding in seconds, consistent quality without fatigue. Humans for architecture review, novel threat identification, and the kind of judgment that only comes from watching systems fail in unexpected ways.

98.3% automated reentrancy detection is a meaningful number. A senior auditor spotting that a liquidation mechanism creates a drain incentive under specific market conditions that the code doesn’t account for — that’s a completely different kind of expertise. Both matter. One doesn’t replace the other.

One pre-launch audit and then moving on — that’s not a security strategy anymore. The protocols that last treat security as something running continuously alongside everything else.

The bugs are in the code right now. Who finds them first is the only variable.

Key Takeaways

→ $3.4B lost in 2025 to boring, well-documented, preventable bug classes.

→ A hybrid deep learning model hit 98.3% accuracy on reentrancy detection in KFUPM’s research.

→ AI responded in ~19 seconds in the Neruduab case. Human teams take 10–30 minutes. That gap is the whole problem.

→ Post-launch active defense — continuous monitoring plus circuit breakers — is now the floor.

→ XAI makes model decisions readable. Trust is the real DeFi adoption ceiling.

→ The GENIUS Act resolved a major source of U.S. regulatory ambiguity (stablecoins) and unlocked institutional capital.

→ Attackers use the same AI. This is a race with no finish line.

→ Safety assumptions expire. One pre-launch audit is not a security strategy.