Is Your Bank Data Safe? 5 Critical Ethics Questions for the AI Age

Written May 2026

Marcus almost missed it.

It was a bank newsletter. Third paragraph down. Buried between two announcements about new app features. The sentence said the bank’s AI tools would use his transaction data to provide “personalized recommendations.”

No opt-out link anywhere. The phrase “personalized recommendations” had no definition attached to it. Whether his data was staying inside the bank or going somewhere else — the email didn’t say.

He spent 45 minutes trying to find a privacy settings page. Never found one.

I hear this story a lot. Financial AI is moving faster than anyone is explaining it. Banks and fintech apps are connecting to the most sensitive parts of your financial life — your full spending history, your income, everything you owe — and most people have no clear picture of what actually happens to that data once it’s in the system.

I wrote this because the gap is getting wider. Not to scare you. To give you five questions worth knowing the answers to before you connect any financial tool to your bank account.

Nothing here is financial or legal advice. Talk to a licensed professional before making major decisions about your finances or privacy.

Question 1 — Is the App Actually Seeing Your Bank Account, or Just Your Transactions?

Most people assume these are the same thing. They’re not.

There are two ways a financial app connects to your bank. The difference between them is significant.

The safe way is read-only API access. The app connects through a data aggregator such as Plaid, Finicity, or MX and is granted permission to view data only. What the app gets is a feed of your transaction history: what you bought, where, and when. With that read-only permission, it can’t move money or initiate transfers. The app is a viewer, nothing more.

The risky way is credential sharing. You hand over your actual bank username and password. The app signs in as you. What it can do from there depends on how carefully it was built — and what happens if it gets compromised.

The Vercel breach in April 2026 showed how fast broad access goes wrong. One employee gave broad Google Workspace permissions to an AI tool. A malware infection sat undetected for two months. The eventual extortion attempt hit $2 million. Handing an app your bank login is the same kind of broad access, pointed at your money.

Your first question for any financial app: does it use a regulated, read-only data aggregator, or does it want your login credentials? If it’s the latter — find a different app.
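If you want to see that first question as logic rather than advice, here is a minimal sketch. The permission names are hypothetical and made up for illustration; real aggregators and banks use their own names, so treat this as a way of thinking about the check, not as any provider’s actual API.

```python
# Hypothetical permission names for illustration; real providers use their own.
READ_ONLY_SCOPES = {"transactions:read", "balances:read", "accounts:read"}
WRITE_SCOPES = {"payments:initiate", "transfers:write"}


def assess_connection(requested_scopes, asks_for_bank_password):
    """Return a short verdict on how an app wants to connect to a bank account."""
    if asks_for_bank_password:
        # Credential sharing: the app could sign in and act as the account holder.
        return "avoid: credential sharing"
    requested = set(requested_scopes)
    risky = requested & WRITE_SCOPES
    if risky:
        return "caution: can move money (" + ", ".join(sorted(risky)) + ")"
    unknown = requested - READ_ONLY_SCOPES
    if unknown:
        return "caution: unrecognized permissions (" + ", ".join(sorted(unknown)) + ")"
    return "ok: read-only access"


print(assess_connection(["transactions:read", "balances:read"], False))
print(assess_connection(["transactions:read", "payments:initiate"], False))
print(assess_connection([], True))
```

The order of the checks is the point: a request for your password ends the evaluation before any permission list is even considered.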

Question 2 — Could Your Data Be Used to Train the AI?

This is the question that gets buried in paragraph 14 of a 47-paragraph terms of service.

When you connect your bank account to an app, your transaction data doesn’t sit in a sealed box. Depending on the platform’s policies, it may be used to train the AI model, feeding the system new patterns to learn from and making it smarter for every user, including you. Your actual financial behavior becomes training material. That process runs on your personally identifiable information (PII).

In 2026, regulators got specific about this. Updated Regulation S-P requires financial firms to verify their AI vendors don’t use client data to train public models. Smaller RIAs (registered investment advisers) under $1.5 billion in assets face a hard deadline of June 3, 2026. That standard applies to firms, not to individual consumers using personal finance apps. But it tells you where the regulatory consensus landed: your data should not train AI without your clear, informed consent.

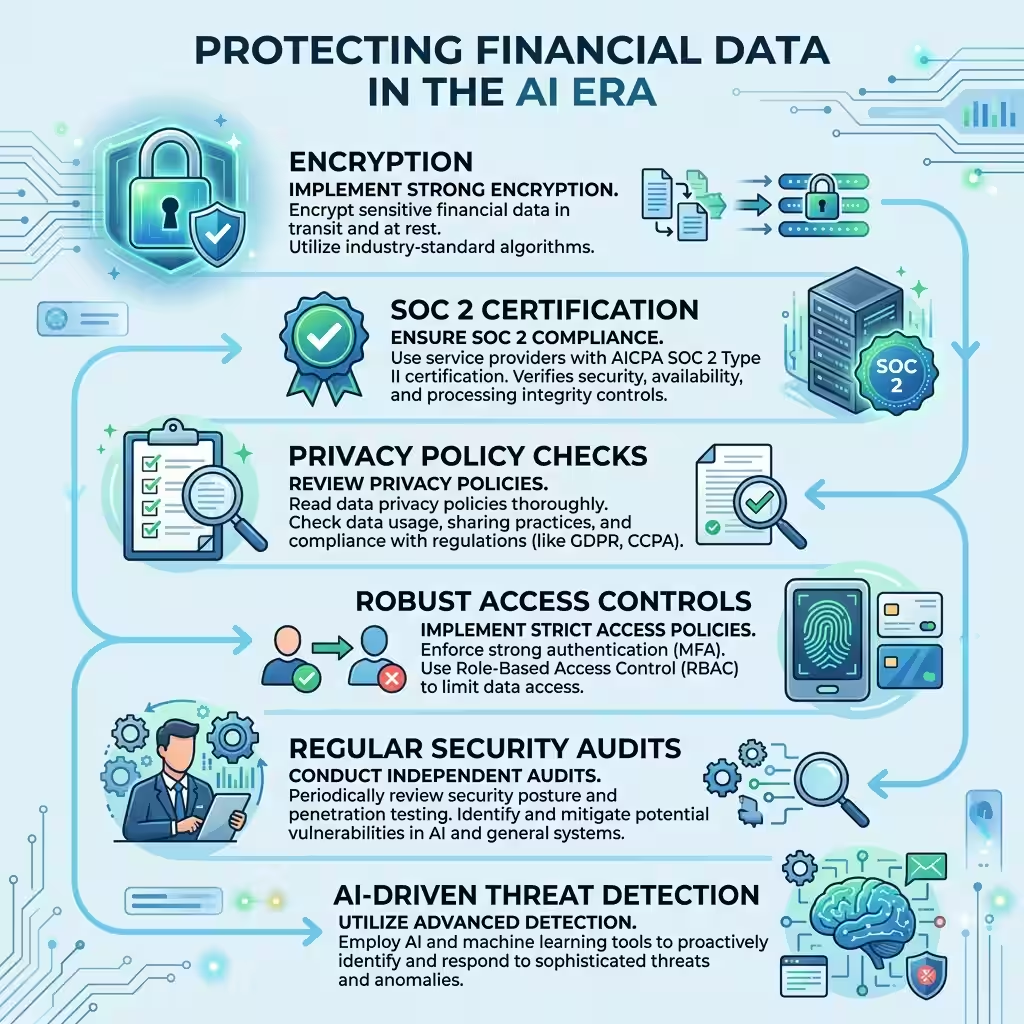

Two things to check before trusting a platform with your account. Does it have a current SOC 2 Type II report? That’s an independent audit of how data controls actually operated over a period of months, not a point-in-time snapshot. And does its privacy policy say directly that your data is never used to train external models? If the policy doesn’t answer the question clearly, assume your data can be used for training.

Question 3 — What Happens When the AI Gets It Wrong?

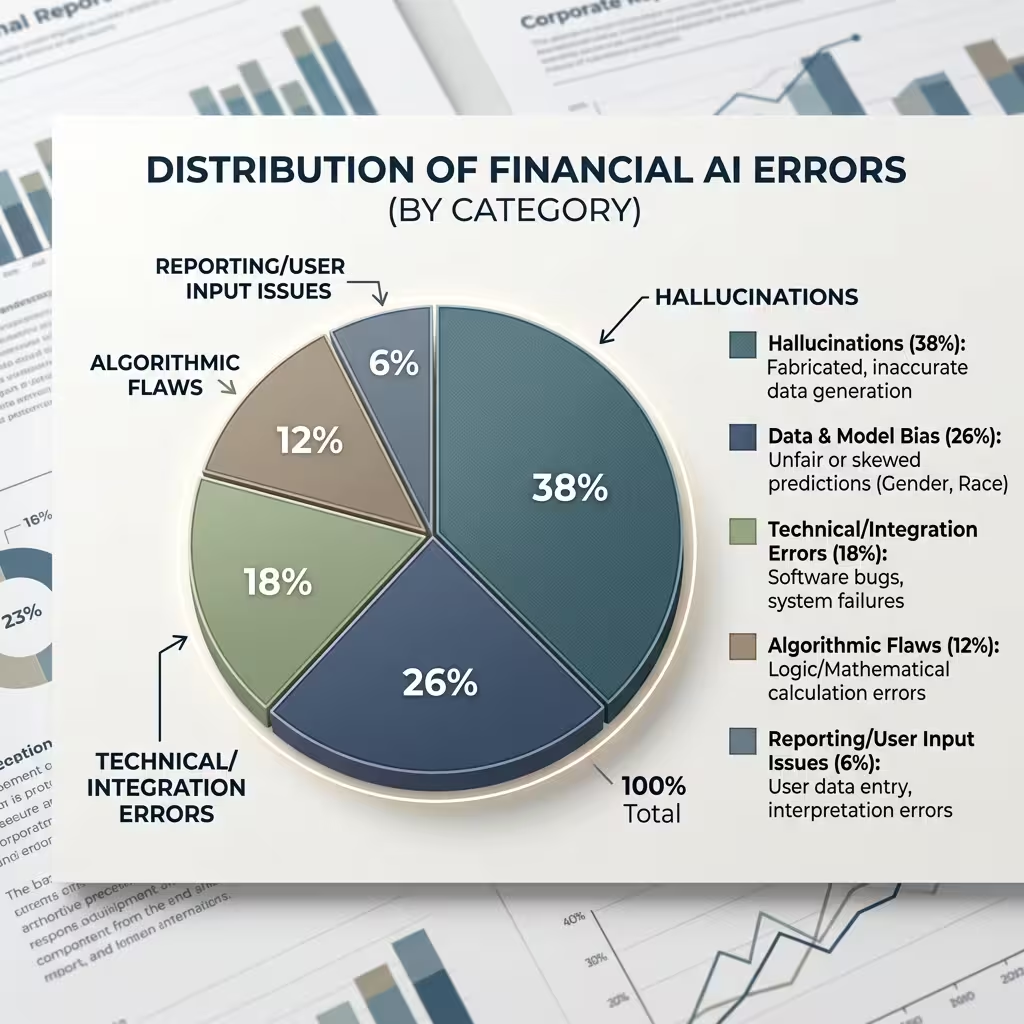

AI makes mistakes. In financial contexts, hallucination rates run between 3% and 27% depending on the system. A confidently formatted wrong answer looks identical to a correct one. That’s not a minor technical issue when the wrong answer affects your credit.

The CFPB got specific here. When an AI system issues an adverse action — a credit denial, a rate increase, a reduced limit — the explanation given to the consumer must be specific to their actual situation. Not a generic checkbox form. The real factors that drove the model’s decision. Even if the company running the model can’t fully explain how the model works internally.

So if you get denied for credit and the reason letter is vague, generic, or model-generated with no specifics — push back. The regulatory standard requires real specificity. You have the right to know what actually drove the decision.
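To make “specific reasons” concrete, here is a small sketch of how the factors behind a denial can be pulled out of a simple scoring model. Everything in it is an assumption for illustration: the weights, the baseline applicant, and the factor names are invented, and real credit models are far more complex than a three-factor linear score.

```python
# Hypothetical weights for a toy linear credit score.
WEIGHTS = {
    "credit_utilization": -120.0,   # higher utilization lowers the score
    "missed_payments_12m": -35.0,   # each missed payment lowers the score
    "account_age_years": 4.0,       # older accounts raise the score
}
# Hypothetical reference applicant used as the comparison point.
BASELINE = {"credit_utilization": 0.30, "missed_payments_12m": 0, "account_age_years": 8}


def top_adverse_reasons(applicant, n=2):
    """Return the n factors that pulled this applicant's score down the most."""
    contributions = {
        name: WEIGHTS[name] * (applicant[name] - BASELINE[name]) for name in WEIGHTS
    }
    negative = [(name, value) for name, value in contributions.items() if value < 0]
    negative.sort(key=lambda item: item[1])  # most negative contribution first
    return [name for name, _ in negative[:n]]


applicant = {"credit_utilization": 0.85, "missed_payments_12m": 2, "account_age_years": 3}
print(top_adverse_reasons(applicant))
```

A letter built from output like this names the factors that applied to you. A letter that only says “insufficient score” does not.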

The accountability question matters here too. When AI harms someone financially — through a biased credit score, an incorrect fraud flag, a bad recommendation — who carries the liability? In 2026, the answer is consistent: the institution that deployed the model. Not the vendor who built it. Under the April 2026 joint guidance from the OCC and Federal Reserve, third-party AI models must meet the same validation and governance standards as internal ones. The firm that licensed the tool carries the responsibility for what it does.

Question 4 — Are You Being Discriminated Against Without Knowing It?

This is the question that gets the least media coverage. It carries some of the largest real-world consequences.

AI credit models use data to build predictions. The problem is that some variables which look neutral actually aren’t. ZIP code is the clearest example. Sounds reasonable — location-based financial data. But ZIP codes correlate with race and national origin in ways that produce discriminatory lending results when the model runs them.

Education level works the same way. The variable seems fine until you map it against who gets denied and who doesn’t.

This is what regulators call proxy discrimination. The Equal Credit Opportunity Act (ECOA) prohibits it. And crucially, financial institutions remain responsible for discriminatory AI outcomes even when the model came from a third-party vendor and the bias was unintentional. The company that ran the model on you owns the outcome.
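One way analysts screen for this is to compare approval rates between groups. The sketch below uses made-up outcomes and the “four-fifths” heuristic, a rule of thumb borrowed from employment testing. It is a screening signal only, not the legal test under ECOA, and the 0.8 threshold is an assumption for this example.

```python
def approval_rate(decisions):
    """Share of approvals in a list of True/False decisions."""
    return sum(decisions) / len(decisions)


def adverse_impact_ratio(group_decisions, reference_decisions):
    """Approval rate of one group divided by the reference group's approval rate."""
    return approval_rate(group_decisions) / approval_rate(reference_decisions)


# Made-up outcomes for two sets of ZIP codes; True means approved.
zip_set_a = [True] * 8 + [False] * 2   # 8 of 10 approved
zip_set_b = [True] * 5 + [False] * 5   # 5 of 10 approved

ratio = adverse_impact_ratio(zip_set_b, zip_set_a)
# Assumed screening threshold: a ratio under 0.8 calls for a closer look.
needs_review = ratio < 0.8
print(round(ratio, 3), needs_review)
```

Here the second set of ZIP codes is approved at about 62% of the rate of the first. If those ZIP codes also line up with race or national origin, a variable that looked neutral is doing discriminatory work.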

California and New York both expanded their requirements in 2026. Consumers in those states have more explicit rights to understand and challenge automated financial decisions. For everyone else, the practical move is the same wherever you live: when an AI system makes a decision about your credit or account, ask for specific reasons. If you get a generic model-generated score with no detail, request more. The regulatory standard says they have to provide it.

Question 5 — What Are the Real Breach Risks and What Actually Protects You?

Here’s what most people get wrong about cybersecurity in 2026.

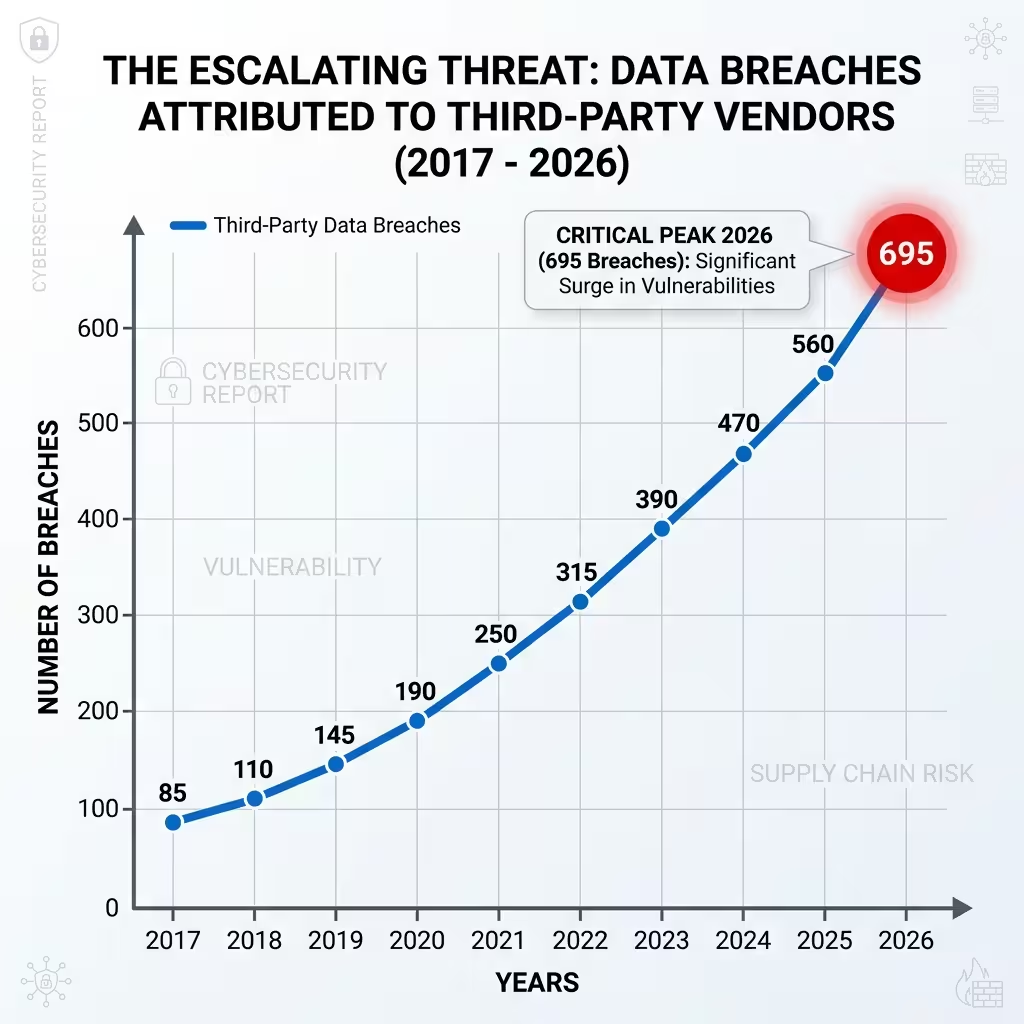

The big breaches aren’t coming through the front door anymore. Hackers aren’t breaking through bank firewalls. They’re finding vendors, BPO contractors, and OAuth-connected apps with access to the institution’s data — and walking in through those side connections.

Three examples from early 2026. Citizens Financial and Frost Bank lost 3.4 million records when the Everest ransomware group hit a shared third-party vendor. Adobe lost 13 million customer support tickets through a phishing attack on a third-party BPO firm in India — one support agent could pull millions of records in a single query. France Titres had 11.7 million identity card records exposed through an undisclosed third-party pathway.

In each case, the primary institution had reasonable security. The attacker found a weaker partner in the ecosystem.

For individual consumers, this changes what protecting yourself actually means. The question isn’t “is my bank secure?” It’s “what third parties does my bank share data with, and how secure are those third parties?”

On encryption: in nearly every 2026 incident, the stolen data was unencrypted when it was taken. Persistent encryption — where data stays encrypted as it moves through systems, not just while sitting in one place — would have made most of those records useless after exfiltration. When evaluating any app for open banking safety, ask whether it uses encryption that travels with the data, not just encryption at rest.

On decentralized finance (DeFi): the privacy risks are different, not smaller. No central database means no single honeypot for attackers. But the flip side is equally real — no regulatory framework, no consumer recourse when something goes wrong, and data consent practices that vary wildly from platform to platform. Most DeFi privacy failures come from wallet exposure and smart contract vulnerabilities rather than institutional database breaches. PII protection is inconsistent across platforms. “Decentralized” and “private” are not the same thing.

The One Fraud Pattern That Bypasses All of This

There is a specific type of financial fraud worth understanding because no privacy setting stops it.

“All-green” fraud is fraud where every security check comes back green because you are the one approving the payment. The attacker doesn’t breach the bank. They manipulate you into authorizing a payment yourself. You authenticate from your own device. You’re in your usual location. Standard fraud controls don’t flag anything because technically everything looks correct. The customer is being deceived, not hacked.

AI has made this industrial. Voice cloning for fake family emergency calls. AI-generated personas for romance fraud that runs for months. Authorized Push Payment scams where the victim moves their own money to a criminal account.

The new Nacha rules that came into effect March 20, 2026, require financial institutions to detect criminal payee accounts before funds clear — across the full payment journey, not just at origination. But the most reliable behavioral signal for this fraud type is hesitation. A customer who pauses before a large transfer. Someone on the phone while moving money. Systems that detect those subtle behavioral signals can flag the transaction before it completes.
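As a rough sketch of what detecting those signals could look like, here is a rule-based check. The field names and thresholds are hypothetical, chosen only to make the idea concrete. Real systems use many more signals and are tuned on real data.

```python
from dataclasses import dataclass


@dataclass
class TransferAttempt:
    amount: float
    payee_is_new: bool
    seconds_on_confirm_screen: float
    phone_call_active: bool


# Hypothetical thresholds, not taken from any rule or vendor.
LARGE_AMOUNT = 1000.0
LONG_PAUSE_SECONDS = 45.0


def hesitation_signals(attempt):
    """List the behavioral signals present in a transfer attempt."""
    signals = []
    if attempt.amount >= LARGE_AMOUNT and attempt.payee_is_new:
        signals.append("large transfer to new payee")
    if attempt.seconds_on_confirm_screen >= LONG_PAUSE_SECONDS:
        signals.append("long pause before confirming")
    if attempt.phone_call_active:
        signals.append("on a call while transferring")
    return signals


def should_hold_for_review(attempt):
    """Hold the payment when two or more signals appear together."""
    return len(hesitation_signals(attempt)) >= 2


suspicious = TransferAttempt(4500.0, True, 70.0, True)
routine = TransferAttempt(60.0, False, 3.0, False)
print(should_hold_for_review(suspicious), should_hold_for_review(routine))
```

Requiring two signals together is a deliberate choice in this sketch: one pause on its own is normal, but a pause plus a phone call plus a new payee is the pattern scammers create.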

No privacy checklist stops this. Knowing the pattern is the protection.

People Also Ask

Is it safe to share my bank login with AI apps?

No. Giving any third-party app your actual bank username and password creates serious risk. Legitimate financial apps use read-only connections through aggregators like Plaid or Finicity. They never ask you to hand your login to the app itself. If an app asks for your bank password, look for a different app.

How do I protect my financial data in the AI era?

Use apps that connect through read-only APIs only. Check for SOC 2 Type II certification. Confirm in the privacy policy that your data is not used to train external AI models. Review and revoke OAuth permissions you’ve granted to third-party tools. If an AI system makes a financial decision about you, request specific reasons — not a generic model output.

What is PII protection in AI financial apps?

PII is your personally identifiable information — name, address, account numbers, transaction history, anything that identifies you individually. Responsible platforms collect only what they need, store it with strong encryption, never share it for model training without explicit consent, and give you a clear way to review or delete it.

What encryption standard should I look for?

As a baseline, AES-256 encryption for data at rest and TLS 1.2 or later for data in transit. The stronger standard is persistent encryption, where data stays encrypted as it moves through multiple systems, not just while sitting in one place. A SOC 2 Type II report means an independent auditor tested the platform’s security controls over a period of time.

Is DeFi safer for privacy than traditional banking?

Different risks, not fewer risks. DeFi removes the central database that institutional attackers target. But it also removes the regulatory protections, consumer recourse, and audit standards that traditional banking provides. Data consent practices in DeFi vary enormously across platforms and remain largely unregulated. For most consumers, the privacy risks in DeFi come from wallet exposure and smart contract vulnerabilities — not institutional data breaches.

What Marcus Did

He never found the privacy settings page. He still doesn’t know what his bank is doing with his transaction data.

But he started asking the same three questions before connecting any new app: read-only API or credential sharing? SOC 2 certified? Does the privacy policy say no model training on customer data?

His bank’s AI feature is still running. He can’t control that. What he can control is which other tools he connects to his account, and what he checks before connecting them.

Financial AI data privacy in 2026 is imperfect on the best day. The tools are useful. The risks are real. The explanations from companies are inconsistent and often written for legal compliance rather than human understanding.

You can’t fix the system. But you can ask better questions before you let it in.

About the Author

Marcus Delray covers AI in financial services, cybersecurity, and fintech ethics. He has written on data privacy, consumer financial protection, and regulatory compliance for nine years.

Disclaimer: General information only. Not financial, legal, or security advice. All incidents, regulatory requirements, and statistics are based on available public data as of May 2026. Verify current requirements with a licensed professional. The author and publisher accept no liability for outcomes based on this content.